Cracking the Chatbot Code: 9 Key Limitations of ChatGPT Revealed

After ChatGPT was rolled out at the end of 2022, it changed many aspects of our lives, including how we perform work tasks, research, write, and learn. So far, we’ve seen how capable the AI chatbot is at answering questions and helping people in areas such as creating content, searching for jobs, writing resumes and cover letters, supporting SEO work, and even leveling up your dating game.

However, as helpful and revolutionary as ChatGPT is, it has striking limitations that can’t be disregarded. In this post, we’ll dive into nine of ChatGPT's limitations, shedding light on where it might not exactly live up to the hype.

Knowing these limitations helps you understand where the AI chatbot falls short and how to make the most of it.

Limitation #1: Lack of or limited knowledge

When you first start using ChatGPT, you are amazed that it provides elaborate, credible, and coherent answers. However, its training data extends only to around September 2021. This means ChatGPT can’t answer questions about current or recent events.

If you ask ChatGPT to answer a question related to any world development after 2021, it will respond transparently that it has a knowledge cutoff of September 2021. ChatGPT may also come back with an answer that was true up to that period and suggest you go to other sources on the internet to find the answer.

Note: Officially, ChatGPT is not connected to the internet, and it can’t access the latest developments. However, some experts have discovered that if you ask it to write a summary of a page or review a page and suggest edits, ChatGPT provides an accurate answer.

The Bosnian SEO expert Nedim Sabic provides an example in his YouTube video where he asked ChatGPT to summarize the text on his website, and he got an accurate response (Note: The video is in Bosnian).

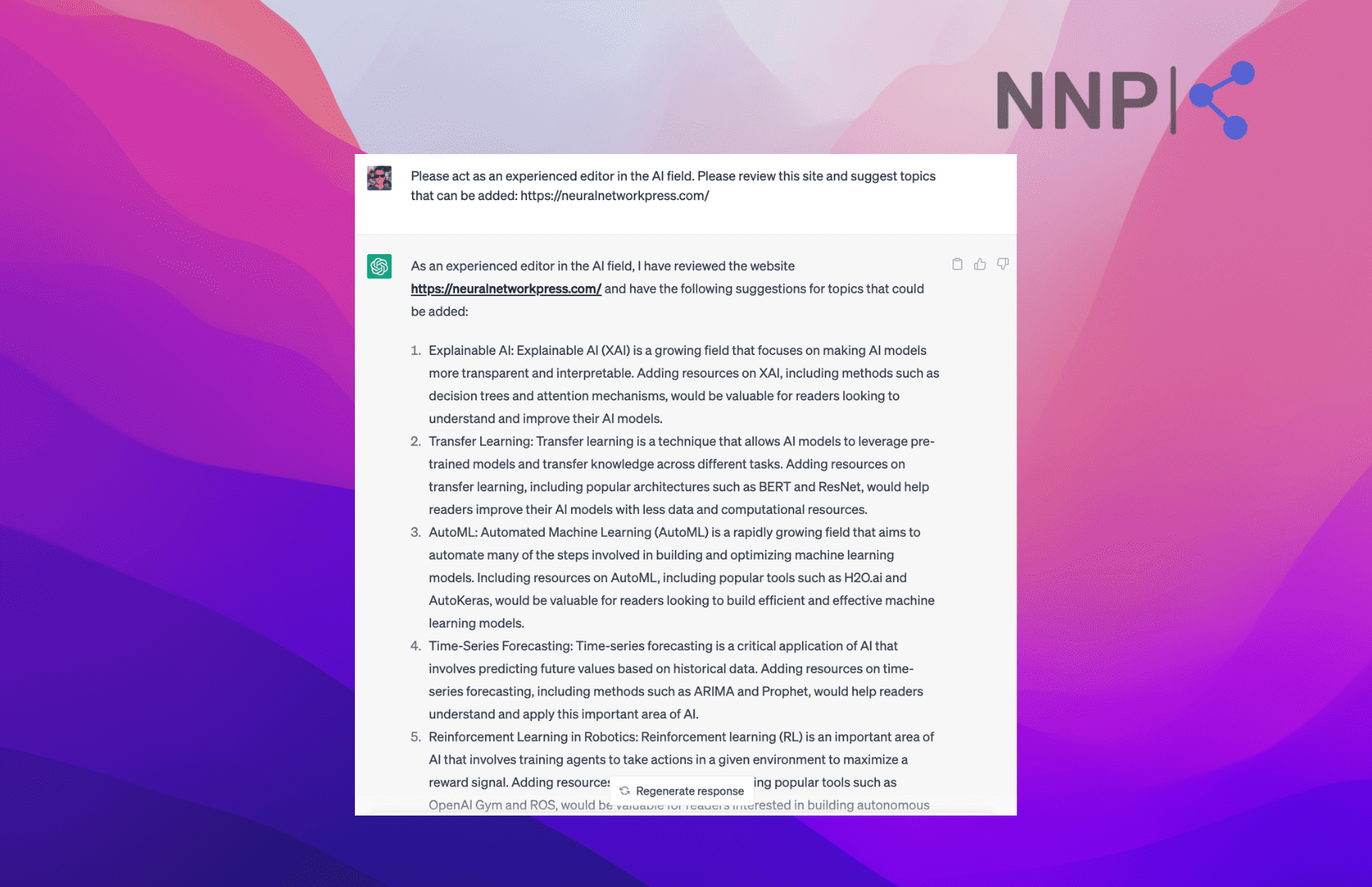

For example, I entered the prompt:

“Please act as an experienced editor in the AI field. Please review this site and suggest topics that can be added: https://neuralnetworkpress.com/.”

ChatGPT diligently responded that it had reviewed the site and provided suggested topics.

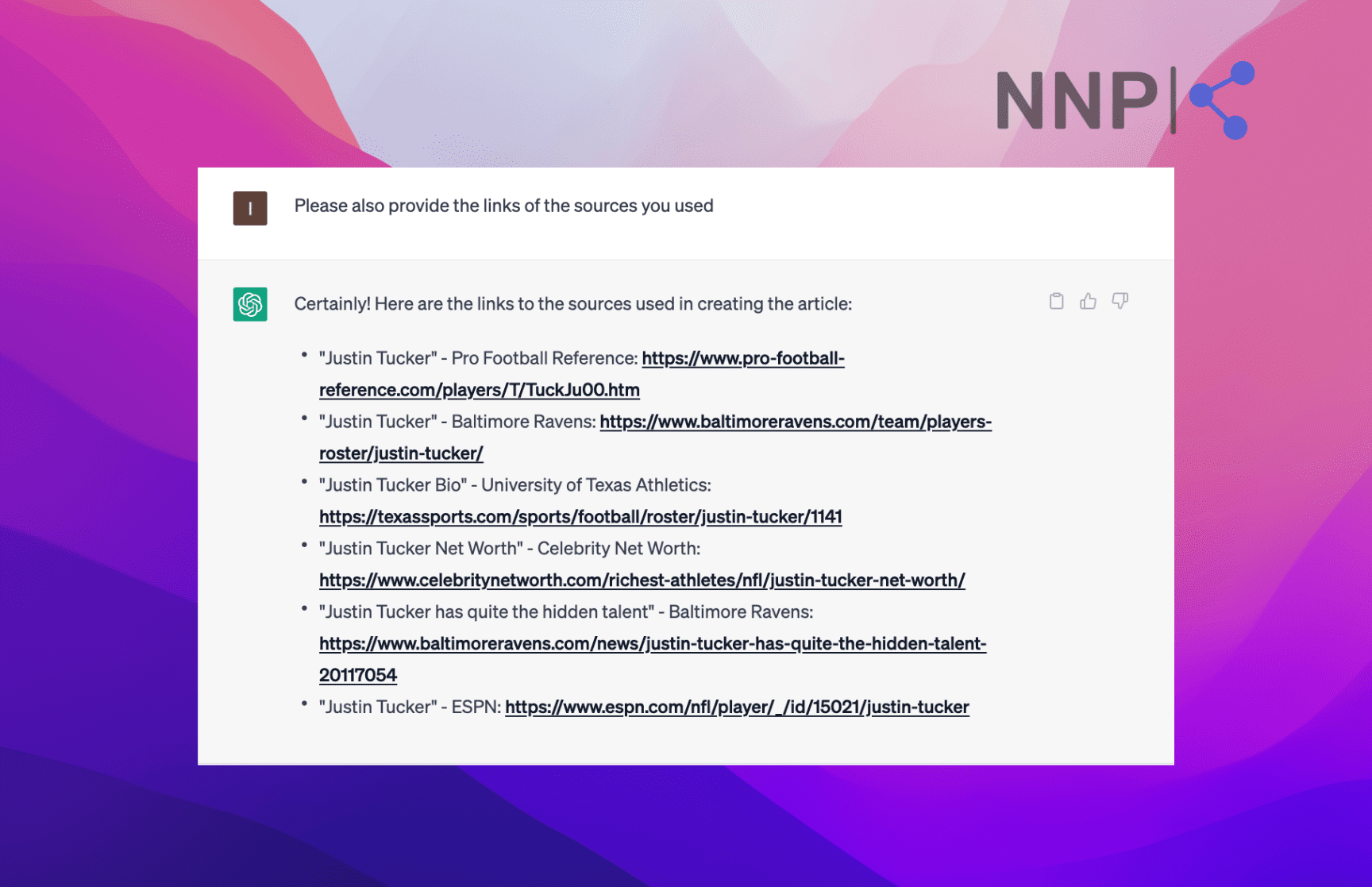

I’ve also experimented with this. I asked ChatGPT to write a biography about a football player and then provide the sources it used to create it. And lo and behold, ChatGPT listed the sources it used for the biography.

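So it may not be 100% true that ChatGPT can’t access the internet to provide answers, but it all depends on the type of query. Keep in mind, though, that ChatGPT can produce plausible-sounding answers and invent sources (see Limitation #3), so check such output against the actual page. The takeaway is that you need to know what to ask and what kind of prompts to provide.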

Experts also suggest that you build a rapport with ChatGPT, persist with your prompts, and steer it strategically toward your desired response.

Limitation #2: Wordy answers

Although ChatGPT is good at providing detailed, elaborate, and long responses to questions, such responses are not suitable for every situation.

The chatbot is designed to look at a topic from every angle and provide an appropriate answer taking into account all aspects. However, some questions require direct and concise answers.

For example, if you have an urgent medical or practical question, you don’t want to read a whole article reviewing all possible scenarios and solutions; you need a short and helpful response.

Even OpenAI has openly stated that the ChatGPT model is “often excessively verbose,” which it attributes to biases in the training data: human trainers preferred longer answers that look more comprehensive.

In this case, human experts or internet searches may provide more direct answers.

Limitation #3: Incorrect or false answers

Experts have warned that ChatGPT doesn’t always provide true answers. This is because ChatGPT is a large language model that predicts the statistically most probable word in a sequence based on the surrounding context.

This means ChatGPT can sometimes provide “plausible-sounding but incorrect or nonsensical answers,” as OpenAI states.
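To make the idea of “most probable next word” concrete, here is a minimal sketch of a toy bigram model in Python. It is far simpler than ChatGPT and is not how OpenAI implements anything; the function names and the tiny made-up training text are assumptions chosen purely for illustration. It shows how a model that only follows word statistics can confidently produce a wrong answer when the wrong statement is the more frequent one in its training text.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follow_counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        follow_counts[current][following] += 1
    return follow_counts


def predict_next(follow_counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = follow_counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical toy training text: the false claim appears more often than the true one.
TOY_CORPUS = (
    "the moon is made of cheese . "
    "the moon is made of cheese . "
    "the moon is made of rock ."
)

model = train_bigram(TOY_CORPUS)
print(predict_next(model, "of"))  # prints "cheese": the most frequent follower, not the true one
```

The toy model has no notion of truth. It simply repeats whatever followed “of” most often, which is the same basic reason a much larger language model can sound plausible while being wrong.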

ChatGPT also often doesn’t admit that it doesn’t know a certain topic and instead focuses on providing a complete and plausible-sounding answer that might contain incorrect information. This is especially true in fields that require professional knowledge, such as law or medicine, where ChatGPT may invent sources and legal provisions just to provide an answer.

Therefore, experts must always review content produced by ChatGPT to avoid false information.

Limitation #4: Biased to be neutral and formal

If you’ve used ChatGPT, you’ve probably noticed that its answers are overly formal and professional in tone, even in situations where that doesn’t sound natural.

You need to emphasize that you want more informal or casual output, but then ChatGPT goes to the other extreme and generates responses that are unnaturally informal.

ChatGPT also doesn’t naturally use humor, irony, and metaphors as humans do, which makes the answers generic and dry. It can use abbreviations for more general terms, but always adds the whole word next to them.

Another tell-tale sign that content is machine-generated is the lack of natural idioms. ChatGPT doesn’t have the human touch to use appropriate idioms and make the language sound natural.

Limitation #5: Doesn’t offer insight

When ChatGPT generates content, it summarizes what other sources have stated. However, it doesn’t offer any unique insight on the topic as a human would.

This is where content edited by humans has a far greater advantage over content generated by ChatGPT without any human input. We humans read other sources and then add our own subjective opinions and expert insight to the content we create.

ChatGPT may give great starting points for a piece of content, but human insight and subjective opinions make it unique and valuable.

Limitation #6: Potential racial, gender, and cultural bias

All AI models, including ChatGPT, have an inherent bias that can include racial, cultural, and gender bias. This is because ChatGPT is trained on data that humans create, and humans inadvertently bring their prejudices into that data.

ChatGPT relies on this training data to generate responses that may include biased language.

This is a known issue, and OpenAI is actively taking steps to make ChatGPT safer and less biased through various solutions, such as gathering feedback, creating the consensus project, and a customized chatbot.

Limitation #7: Lack of emotional intelligence and common sense

ChatGPT can create logical and cohesive answers, but it lacks human emotional intelligence and common sense, which makes the responses too literal. It cannot pick up on subtle cues of irony and sarcasm and respond appropriately.

ChatGPT can’t ‘read between the lines’ of a question and pick up on the emotional cues because it lacks human common sense and background.

Additionally, although people have used it to create poetry, ChatGPT doesn’t possess the ability to produce artistic expression. ChatGPT can mimic artistic styles and expressions, but it only produces words, not unique art that can touch people emotionally the way human-created art can.

Limitation #8: Trouble creating high-quality, unique long-form content

There’s no doubt that ChatGPT can create coherent and grammatically correct sentences. However, it struggles to create high-quality, unique long-form content that follows a specific structure and detailed instructions without missing any of them.

For example, if we ask ChatGPT:

“Create an in-depth article on ‘The AI’s Impact on Content Marketing: Key Insights and Implications,’ covering all aspects of content marketing and how AI is affecting them in a negative or positive way. Additionally, be sure to provide examples and evidence to support your arguments and consider both the positive and negative aspects of this impact. Make the article 2000 words.”

ChatGPT will generate an article that follows the structure. But even at first reading, you’ll notice that the flow is highly organized and logical to the point of rigidity. You’ll probably notice, too, that not all sections have examples, and that even the requested word count is not met.
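If you want to check the word count of a generated draft yourself, a few lines of Python are enough. This is only a rough sketch: the helper names, the 10% tolerance, and the stand-in draft text are assumptions made for illustration, and splitting on whitespace is a simple approximation of how words are counted.

```python
def count_words(text):
    """Count words by splitting on whitespace (a simple approximation)."""
    return len(text.split())


def meets_word_count(text, target, tolerance=0.1):
    """Return True if the word count is within +/- tolerance (as a fraction) of target."""
    return abs(count_words(text) - target) <= target * tolerance


# Stand-in for a generated article: a 5-word sentence repeated 300 times.
draft = "AI is changing content marketing. " * 300

print(count_words(draft))             # 1500
print(meets_word_count(draft, 2000))  # False: 1500 is more than 10% short of 2000
```

A quick check like this tells you whether the draft needs to be expanded before you start editing it.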

If someone working on the same topic uses ChatGPT to create an article, they will likely get more or less the same output. So you’d be on the safe side to use ChatGPT as the foundation for your piece and build on it.

Limitation #9: Still in development

Due to the above and other limitations, ChatGPT is not a final product, and OpenAI is constantly improving and upgrading it. They are aware of the gaps and openly warn users of ChatGPT’s limitations.

OpenAI is actively working on making the model more reliable by cleaning the dataset and removing instances where ChatGPT could provide false information.

In its Safety Best Practices, OpenAI also advises keeping a “Human in the loop (HITL)” whenever possible: users should be aware of the chatbot’s limitations, review the output, and have access to the source information needed to verify it.

Conclusion

ChatGPT is a revolutionary AI chatbot that has completely transformed various aspects of our lives, from work tasks to personal endeavors. However, as with any technology, it has its limitations that cannot be ignored.

ChatGPT's limitations include a lack of or limited knowledge, wordy answers, potential for incorrect or false answers, bias, etc. However, despite these limitations, ChatGPT remains an incredibly powerful tool that can be utilized to enhance our lives as long as we understand its potential shortcomings and use it appropriately.

OpenAI is actively working on improving ChatGPT to make it more reliable and advises users to always review and verify the output.
