From Letters to Lights, Camera, Action: Text-to-Video AI Generation

You know how AI is changing pretty much everything we know about the world, right? Self-driving cars, voice assistants that almost seem human, you name it. One frontier that AI is currently reshaping at remarkable speed is text-to-video generation.

This powerful concept allows us to transform mere strings of text into a series of moving images, enabling a seamless journey from a writer's mind to the viewer's screen. Imagine typing a script or a story on your computer, and then watching as an AI tool magically brings your words to life as a video. This is no longer a subject of science fiction. Instead, it is a rapidly emerging reality, where prose is pixelated, and narratives get a new dimension.

Through this article, we aim to journey into this fascinating world of text-to-video AI generation. We'll delve into the underpinnings of how these AI models work, the tools that are leading the charge, and the transformative potential of these advancements in storytelling and content creation.

What is text-to-video generation?

Text-to-video AI generation is an advanced area of artificial intelligence that deals with the automatic creation of video content from written text. In essence, this technology can take a piece of written text, understand its context, and generate a video that visually represents the ideas and narratives within the text.

This process involves several different areas of AI, including natural language processing (NLP), computer vision, and machine learning.

Natural Language Processing helps the AI understand the content, context, and structure of the written text. It allows the AI to interpret the text much like a human would, understanding not just individual words but also sentences and the relationships between different pieces of information.

Computer Vision and Machine Learning techniques are then used to create the video. These might include object detection and recognition (to understand what objects need to be in the video), image synthesis (to generate images from scratch that match the text), and temporal modeling (to understand how the video should change over time).
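To make the hand-off between these stages a bit more concrete, here is a deliberately simplified Python sketch. It is not how any production model works: the "language understanding" step is just a sentence split, and the "temporal" step only assigns each sentence a range of frames. The names `Shot` and `plan_shots`, and the defaults of 8 frames per second and 2 seconds per shot, are assumptions made up for illustration.

```python
import re
from dataclasses import dataclass


@dataclass
class Shot:
    description: str  # text an image-synthesis stage would be conditioned on
    first_frame: int  # inclusive
    last_frame: int   # inclusive


def plan_shots(text, fps=8, seconds_per_shot=2):
    """Split text into sentences and give each one a block of frames."""
    # Toy stand-in for NLP: treat every sentence as one shot.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]
    frames_per_shot = fps * seconds_per_shot
    shots = []
    for i, sentence in enumerate(sentences):
        # Toy stand-in for temporal modeling: shots play back to back.
        start = i * frames_per_shot
        shots.append(Shot(sentence, start, start + frames_per_shot - 1))
    return shots


script = "A fox runs through snow. The sun sets behind the hills."
shots = plan_shots(script)
for shot in shots:
    print(shot)
```

A real system would replace each toy step with a learned model, but the order of operations (understand the text, decide what appears when, then synthesize frames) is the part this sketch is meant to show.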

🔎 Peep under the AI image generators' hood and explore how they work.

Text-to-video tools

Now that we've covered how text-to-video AI works, let’s meet the key players in this exciting field. These are the tools and technologies that are making it happen - the rockstars of text-to-video AI.

Runway’s Gen-2

Runway, a Google-backed AI startup and one of the co-creators of the Stable Diffusion image generator, recently created a buzz in the generative AI community.

In early June 2023, the AI startup launched Gen-2 - a model that generates videos from text or images. Gen-2 is the successor to Runway’s previous model, Gen-1, released in February 2023. What sets Gen-2 apart, and what made the headlines, is that it is billed as the first “commercially available” text-to-video model, while many other models are still in the research phase.

https://www.youtube.com/watch?v=jWXiMBwkfwc

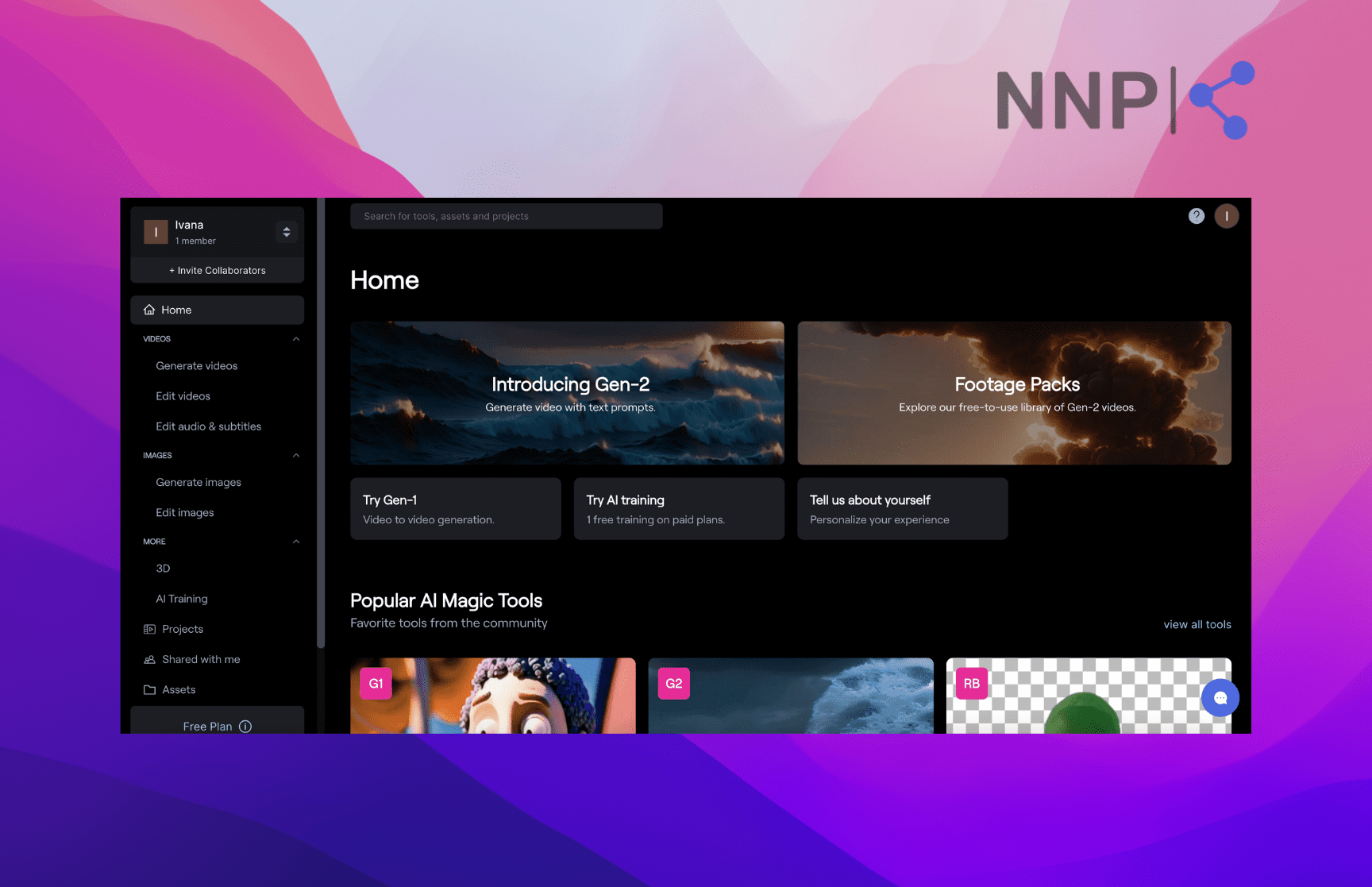

Runway’s platform has an interface similar to that of most image generators. Signing up and reaching the video generation dashboard from the Runway home page is fairly easy. Once you are in the app, you will find the option to generate videos from a text prompt or an uploaded image, and you can choose between Gen-2 and the previous Gen-1.

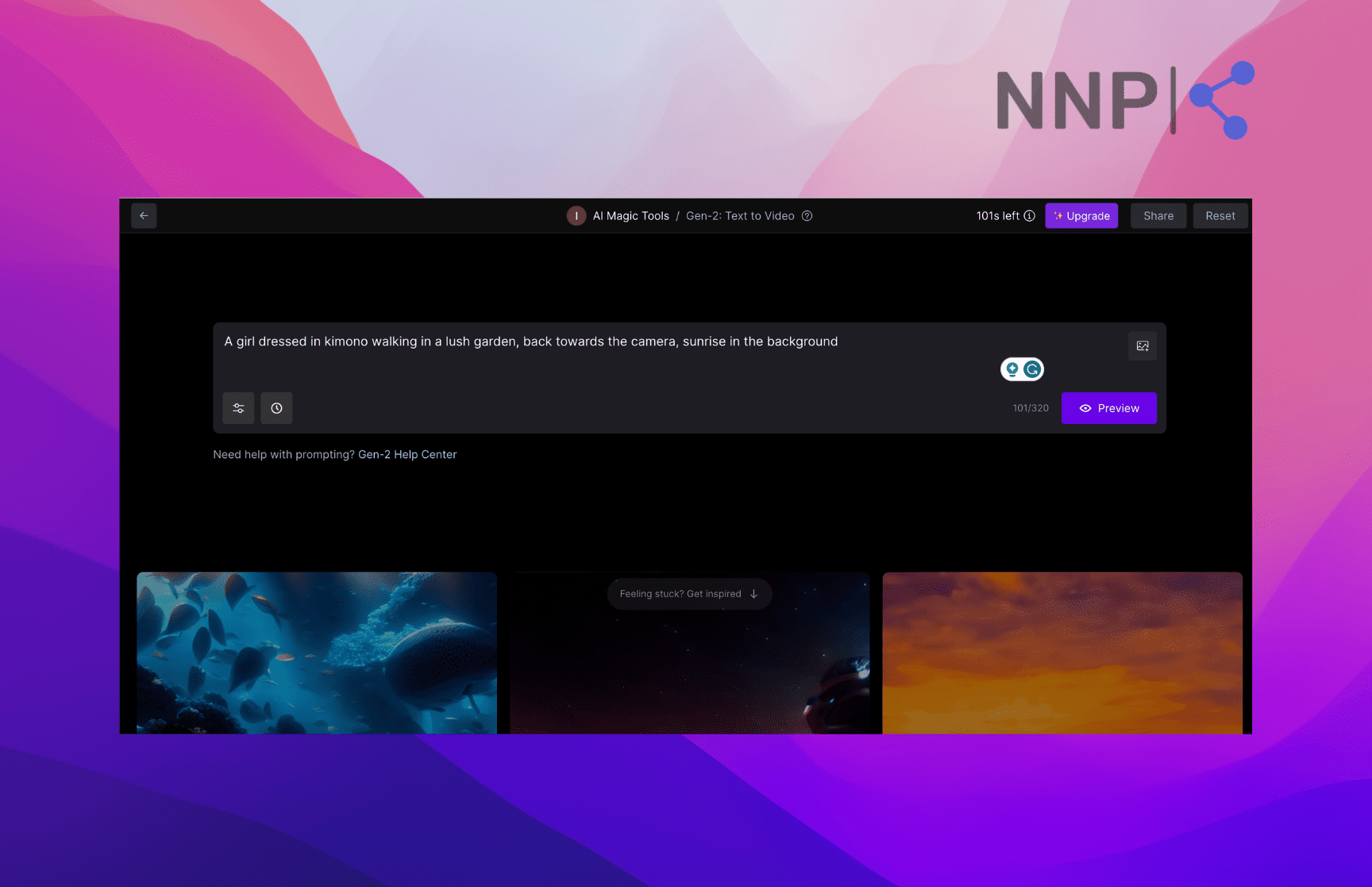

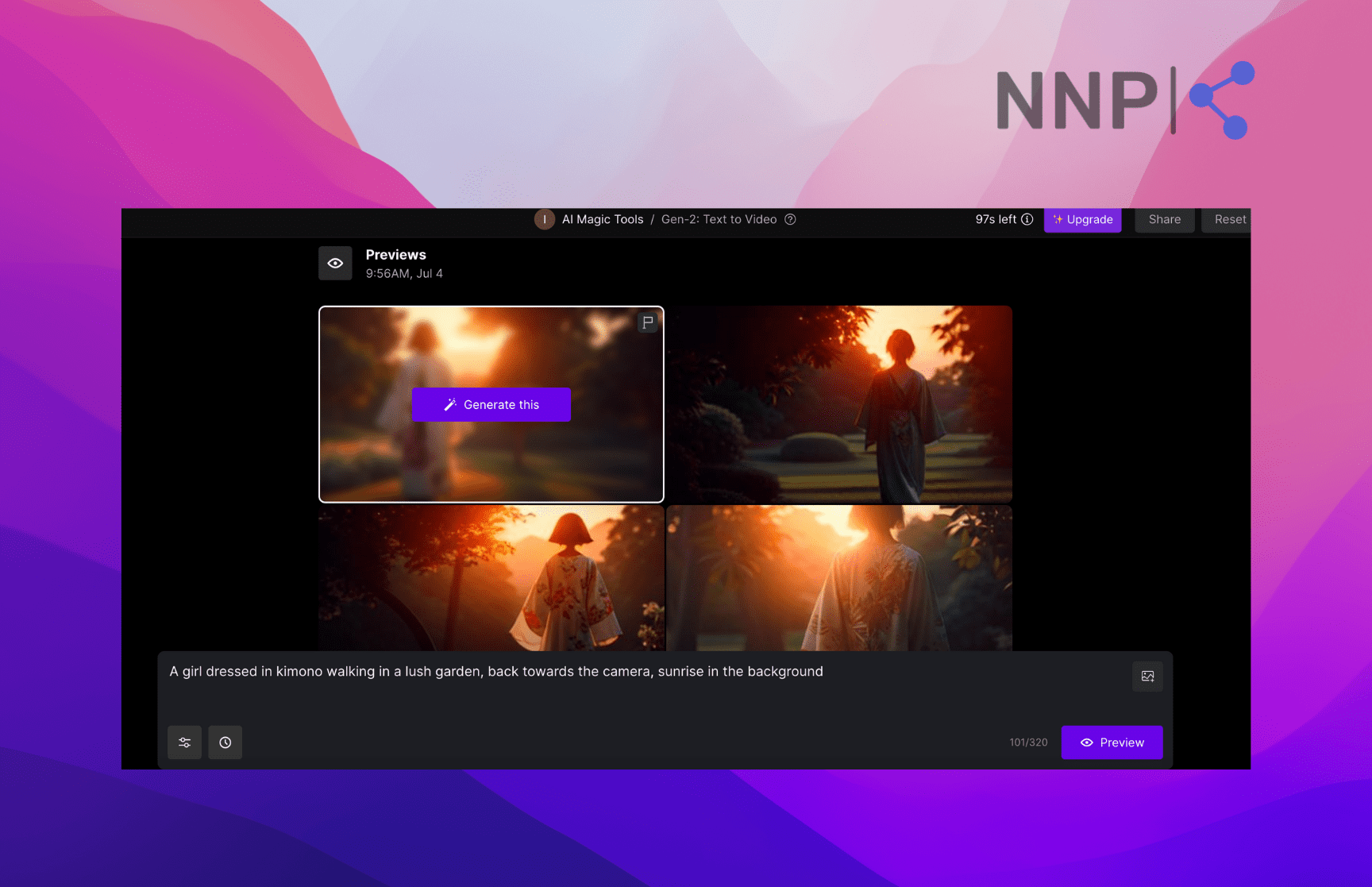

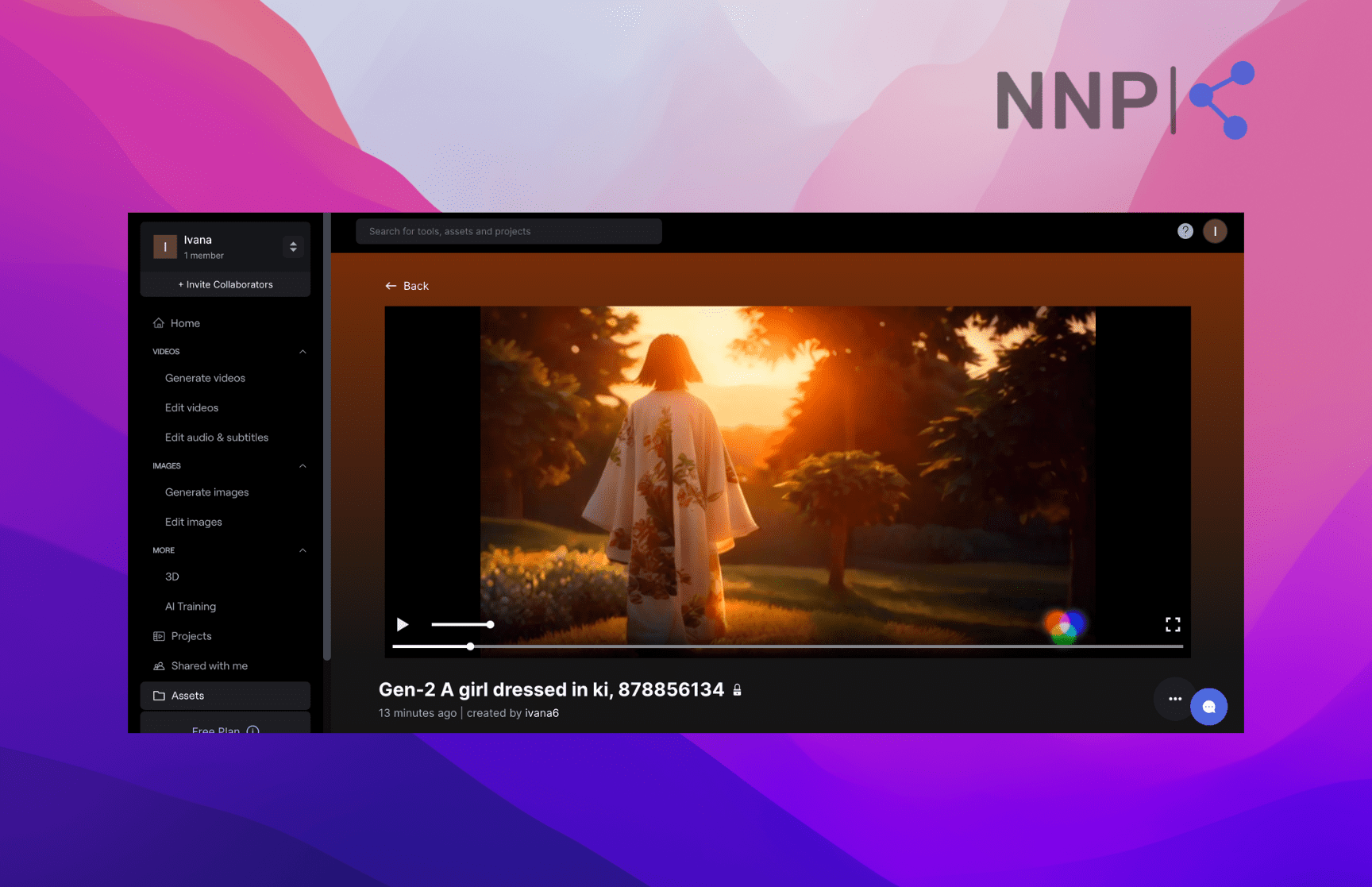

For the sake of this article, I tried Gen-2 to generate a video with a text prompt. After entering the prompt, you have the option to preview 4 image variations and choose 1 to generate a video. It takes up to several minutes for Gen-2 to generate the video.

The free Runway version offers 101 seconds of video generation. If you want more seconds and faster generation, you can upgrade your plan and get additional benefits, such as shorter wait times, upscaled resolution, watermark removal, unlimited video editor projects, and more asset storage space.

However, as with all other pioneering AI breakthroughs, Runway’s Gen-2 videos leave much room for improvement. Users who have tested Gen-2 point to some glaring shortcomings.

Kyle Wiggers from TechCrunch points out several limitations of Gen-2. The first and most obvious is the low frame rate of its 4-second clips, which gives them a slide-show-like look. He also highlights the fuzziness and graininess of the AI-generated videos. Another Gen-2 shortcoming is its inconsistency with human anatomy and physics, which is common to many AI models. Kyle points out that human faces in Gen-2-generated videos look doll-like, with glossy, emotionless eyes and pasty skin that resembles plastic.

Nevertheless, we have to give Runway some credit for breaking new frontiers in text-to-video AI generation. Gen-2 is still an amazing breakthrough in generative AI, and is only the beginning of AI-video models and what they’ll be capable of in the future.

More importantly, Gen-2 helps people create videos with only their imagination and a computer, without the need for fancy equipment, expensive cameras, and painstaking editing - just like Runway’s slogan says, “No lights. No camera. Just action.”

After all, Gen-2 may not be taking on the Hollywood blockbusters or putting animators, CGI artists, and filmmakers out of jobs (thank God!), but it can be used to create some amazing videos. Check out these 12 incredible videos generated with Gen-2:

https://twitter.com/heyBarsee/status/1651961767810179072

🚀 If you are looking to create AI images, check out how Leonardo AI works and find out example prompts to use.

Vimeo’s AI-powered video editor

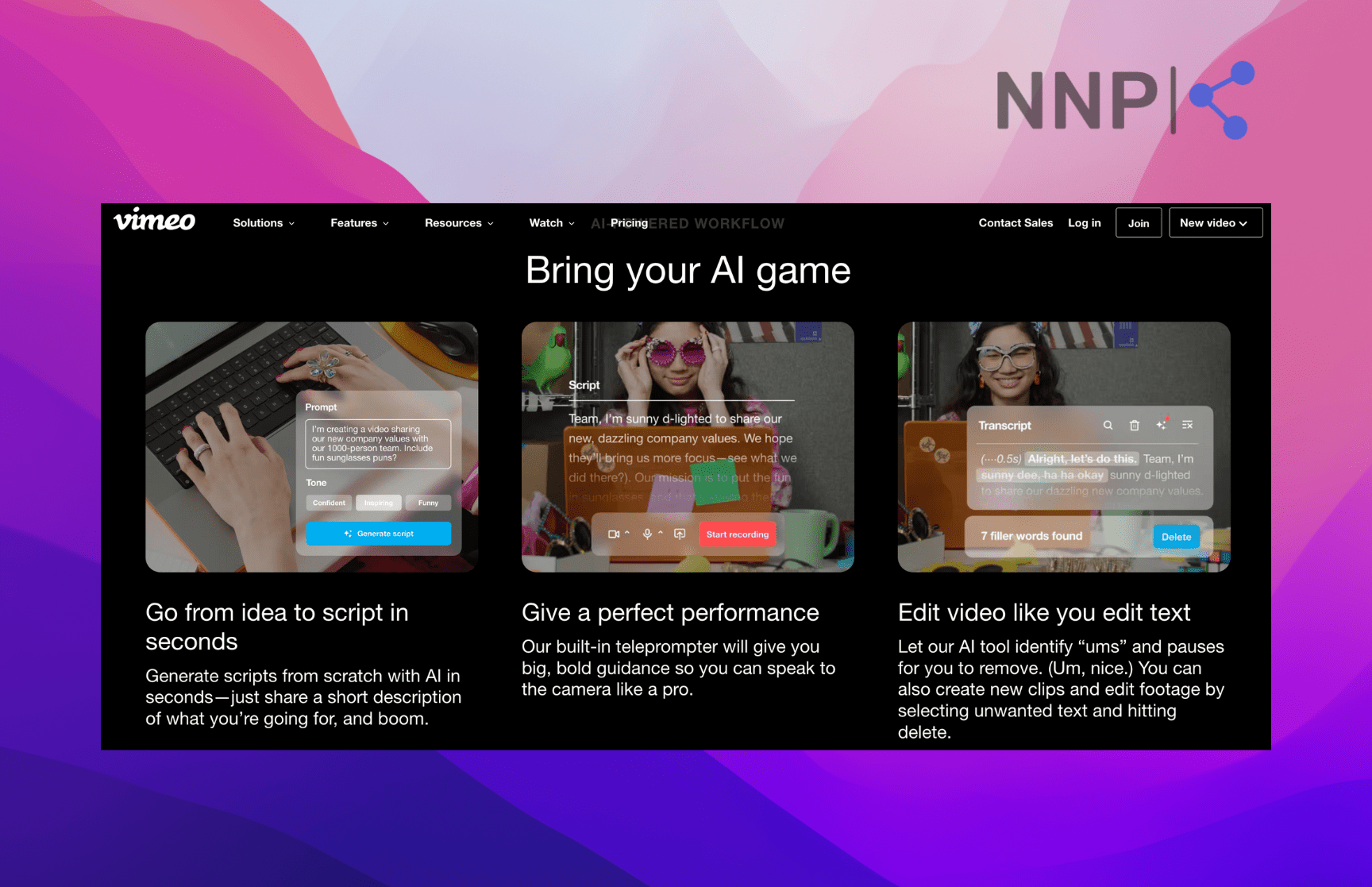

The video platform Vimeo announced that it is integrating AI-powered editing features into its platform. Vimeo’s AI tool suite has been available in its Standard plan since July. Vimeo states that its AI features will allow customers to create a complete video in a matter of minutes - everything from writing scripts and making reels and announcement videos to extracting quotes for marketing snippets and hosting virtual events and meetings.

Image source: Vimeo

In essence, Vimeo added three new features to its service:

- AI script generator that creates scripts based on text prompts, including parameters such as tone and length.

- Built-in teleprompter that allows users to record footage and adjust font size and timing.

- AI text editor that automatically detects long pauses, awkward breaks, and filler words such as ‘ah’ and ‘um.’
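Vimeo has not published how its editor finds these spots, but the general idea behind the third feature is easy to sketch. The Python example below assumes a transcript where every word comes with start and end timestamps (something speech-to-text tools commonly produce). The `Word` class, the `find_cuts` function, the filler list, and the one-second pause threshold are all assumptions for illustration, not Vimeo's actual logic.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    start: float  # seconds from the beginning of the recording
    end: float


FILLERS = {"ah", "um", "uh"}  # hypothetical filler list


def find_cuts(words, pause_threshold=1.0):
    """Return (start, end, reason) spans that an editor might suggest removing."""
    cuts = []
    prev_end = None
    for word in words:
        # A long gap between the previous word and this one counts as a pause.
        if prev_end is not None and word.start - prev_end > pause_threshold:
            cuts.append((prev_end, word.start, "pause"))
        # Compare the word, minus case and trailing punctuation, to the filler list.
        if word.text.lower().strip(".,!?") in FILLERS:
            cuts.append((word.start, word.end, "filler"))
        prev_end = word.end
    return cuts


transcript = [
    Word("Welcome", 0.0, 0.5),
    Word("um", 0.6, 0.9),
    Word("to", 1.0, 1.1),
    Word("the", 1.2, 1.3),
    Word("demo", 3.0, 3.4),
]
cuts = find_cuts(transcript)
print(cuts)
```

On this sample, the function flags the "um" and the gap before "demo." A real editor would then let the user review each suggested cut rather than deleting it automatically.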

The new Vimeo features aim to lower the barrier to entry for beginners and non-specialists who want to create videos but don’t have the necessary video editing skills and knowledge.

Although Vimeo doesn’t offer to create a video with a text prompt from scratch, its AI features help users in crucial aspects of the video-making process.

The new AI capabilities are similar to what Adobe did with their Generative Fill feature, which allows users to add, extend, or remove content from images using simple text prompts without damaging the original image.

Video is one of the most effective ways to communicate a message, but the effort that production requires keeps many people from using it well. Vimeo is trying to make video editing easy and bring the process within reach of all video creators.

Google’s Dreamix

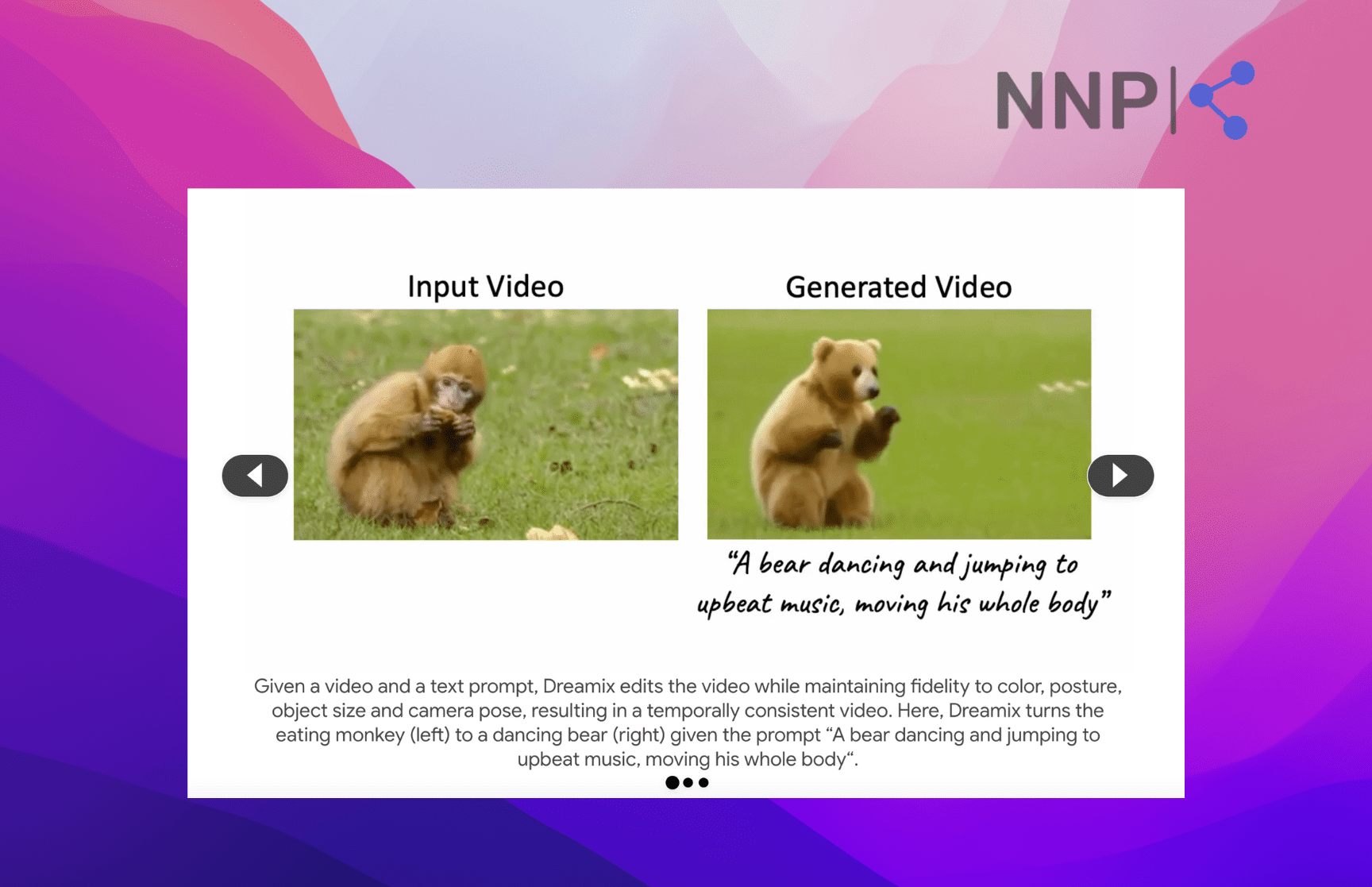

Google’s Dreamix is a cutting-edge AI video editor rooted in diffusion techniques. It has the power to alter existing videos guided by text prompts or even craft entirely new videos from just one existing image.

Image source: Dreamix

This innovative video editor is a major leap forward in the realm of AI, building on the achievements of generative AI models like OpenAI's DALL-E 2, Stable Diffusion, and Google's own Imagen, all of which convert text descriptions into images. While earlier methods like Prompt-to-Prompt or InstructPix2Pix use diffusion models to edit images, AI video models have typically been restricted to synthesis. Dreamix breaks this limitation by offering video editing features.

Here's how Dreamix works: it adds what is called 'noise' to the source images, which are then run through a video diffusion model. This model takes the noised source images and produces new ones, guided by the provided text prompts.

These fresh images are then strung together into a video. The source images essentially serve as a loose blueprint, capturing the basic form or motion of an object but still allowing for changes.
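The "loose blueprint" idea can be illustrated with a toy Python sketch. To be clear, this is not Dreamix's implementation and contains no real diffusion model: frames are just short lists of numbers, `toy_denoise` is a hypothetical stand-in for the text-guided video diffusion model, and the `strength` parameter is an assumption introduced here to show how the amount of noise trades faithfulness to the source against freedom to change it.

```python
import random


def add_noise(frame, strength, rng):
    """Blend each pixel with Gaussian noise; 0 keeps the frame, 1 replaces it."""
    return [(1 - strength) * p + strength * rng.gauss(0.0, 1.0) for p in frame]


def toy_denoise(noisy_frame, prompt_target, strength):
    """Hypothetical stand-in for the text-guided model.

    It pulls the noisy frame toward what the prompt asks for, and the more
    noise was added, the more freedom it has to do so.
    """
    return [(1 - strength) * n + strength * t for n, t in zip(noisy_frame, prompt_target)]


def edit_video(frames, prompt_target, strength, seed=0):
    """Noise every source frame, regenerate it, and string the results together."""
    rng = random.Random(seed)
    edited = []
    for frame in frames:
        noisy = add_noise(frame, strength, rng)
        edited.append(toy_denoise(noisy, prompt_target, strength))
    return edited


source = [[0.2, 0.4, 0.6], [0.3, 0.5, 0.7]]  # two tiny "frames"
target = [1.0, 1.0, 1.0]                     # what the prompt "wants"

untouched = edit_video(source, target, strength=0.0)  # no noise: source survives
replaced = edit_video(source, target, strength=1.0)   # all noise: source is lost
partial = edit_video(source, target, strength=0.5)    # in between: a loose blueprint
```

With no noise the output is the source video, with full noise nothing of the source remains, and the interesting edits happen somewhere in between.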

And Dreamix doesn't stop at editing existing videos - it's also capable of generating completely new ones. It can either tweak a single image in various ways and assemble them into a video or use several images of a certain object to create a video centered around a theme.

While there's potential for improvement in quality and computational efficiency, Google's main goal with Dreamix is to push forward research on tools that enable users to breathe life into their personal content.

✨ Explore 8 Midjourney alternatives for generating AI images.

Other popular AI video generators

Besides Runway, Vimeo, and Dreamix, there is a plethora of other AI video generators that enable you to create fascinating videos based on text. Here are some of them:

Make-A-Video: A recent addition from Meta, Make-A-Video, allows users to generate humorous mini-films from a handful of sentences. Leveraging the latest in text-to-image generation technology, the tool also incorporates pictures and additional videos into the video-making process.

VEED.io: VEED.io combines robust AI capabilities with an intuitive interface to simplify the video creation process. It doubles as an all-in-one video editor, allowing users to trim, crop, and add subtitles, among other features.

Lumen5: With over 800,000 users, Lumen5 is an exceptional online tool for AI video creation. This AI-powered tool streamlines the production of top-tier video content, enabling quick video generation from scratch or based on existing content.

Designs.AI: A top-tier AI-driven content creation platform, Designs.AI, has the ability to turn written content like blog posts and articles into compelling videos. It can also expedite the design process for logos, movies, and banners.

Synthesia.io: Synthesia is a standout AI video generator that enables users to create lifelike AI videos in mere minutes. It's an excellent choice for those seeking cost-effective yet professional-grade videos.

InVideo.io: InVideo.io is a robust video editing tool that can transform text into visual content. It offers over 5000 layouts, iStock media, a library of music, and more. The platform boasts a wealth of AI-powered themes for the simple conversion of text-based info into video content.

DeepBrain AI: This tool is among the top contenders in the AI video generation space, with AI Studios from DeepBrain leading the pack. The platform is renowned for its hyperrealistic avatars and stands out for its quality among AI-powered video SaaS products. With a script and a few extra features, such as a chroma key function and a PPT-to-video function, you can generate a video with ease.

ModelScope: Developed by a subdivision of the tech titan Alibaba, ModelScope is a diffusion model-based text-to-video generator. Though it's not groundbreaking, it's known for producing quirky videos that quickly go viral as memes.

Deforum Stable Diffusion: Deforum is a unique AI generator that creates animations by taking into account previous frames. Utilizing Deforum SD, users can seamlessly create fluid films and animations from Stable Diffusion outputs.

Conclusion

In the rapidly changing world of AI, the frontier of text-to-video generation is drawing widespread attention and excitement. Text-to-video AI generators transform simple lines of text into moving visuals, bringing the written word to life on screen.

Leading the charge are tools such as Runway's Gen-2, Vimeo's AI-powered video editor, and Google's Dreamix. Gen-2, described as the first "commercially available" text-to-video model, creates videos from text or an image, breaking new ground despite some limitations in video quality and realism. Vimeo, meanwhile, is catering to entry-level video creators, introducing AI features such as an AI script generator, a built-in teleprompter, and an AI text editor to help ease the video-making process.

Dreamix, on the other hand, is a game-changer, with the ability to alter existing videos or create completely new ones from a single image. Despite areas for improvement, its introduction marks a significant step in AI, driving research on tools that allow users to animate their own content.

We also mentioned some of the notable players in the landscape of AI video generators. Each of these platforms offers unique capabilities and contributes to a future where creating engaging video content becomes increasingly accessible to anyone armed with imagination and a computer.
